Lottery Tickets in DRL @NERL ICLR Workshop

Date:

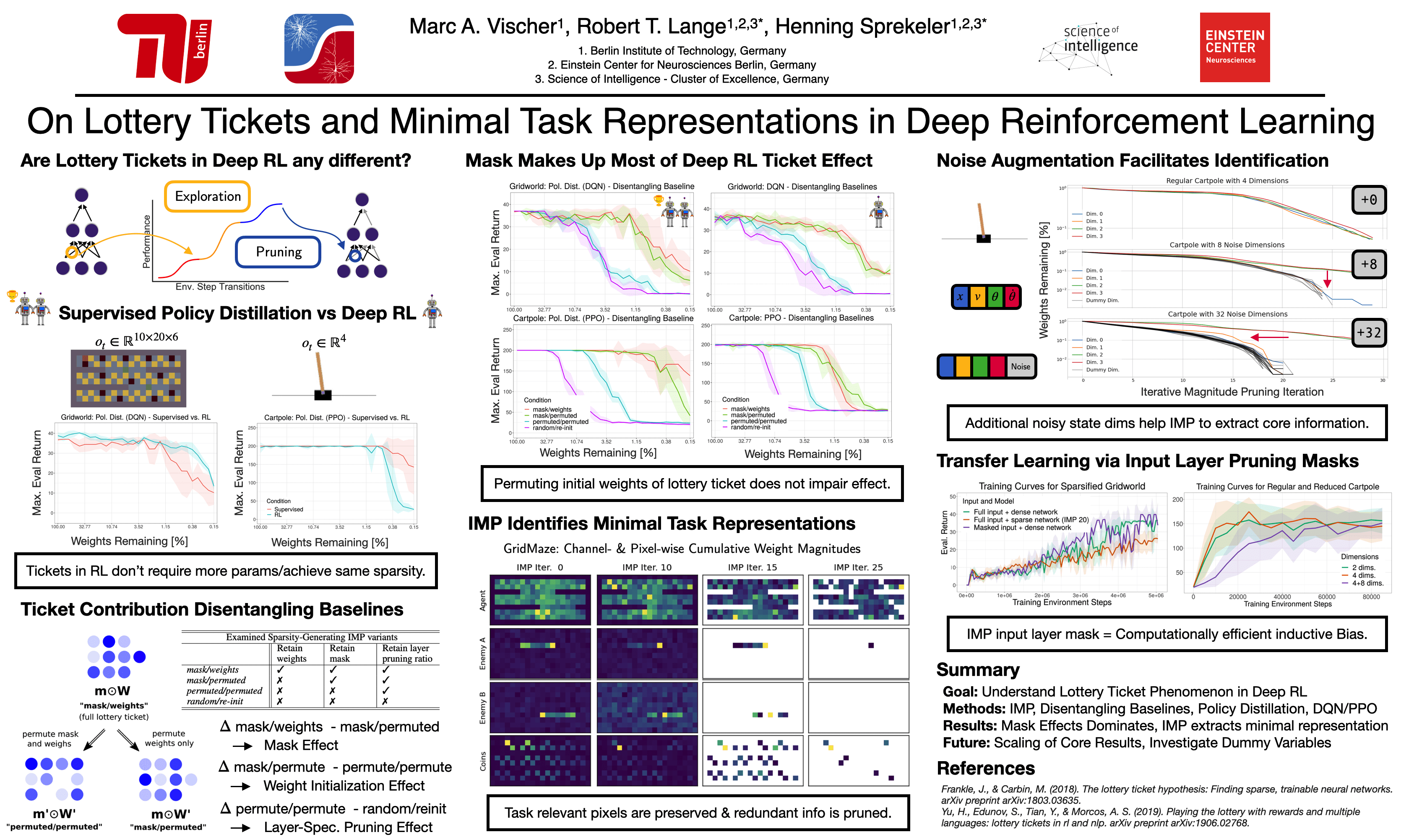

I am very happy to be presenting our recent work “On Lottery Tickets and Minimal Task Representations in Deep Reinforcement Learning” at the ICLR ‘A Roadmap to Never-Ending RL’ workshop. We investigate the lottery ticket phenomenon in Deep Reinforcement Learning and provide evidence that most of the RL ticket effect can be attributed to the discovered pruning mask rather than to the weights the surviving connections start from. Furthermore, the input layer mask discovered by Iterative Magnitude Pruning yields minimal task-sufficient representations. This mask can be used as a pair of “goggles” that compresses the agent's input representation. Dense agents trained on such a compressed representation attain comparable performance at lower computational cost.
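For a concrete picture of the “goggles” idea, here is a minimal NumPy sketch. It is not our actual implementation: the function names (`prune_step`, `input_goggles`, `compress`), the toy weight matrix, and the 20% pruning rate are all assumptions made for illustration, and the retraining that Iterative Magnitude Pruning performs between pruning rounds is skipped entirely.

```python
import numpy as np


def prune_step(weights, mask, fraction=0.2):
    """Remove the smallest-magnitude `fraction` of the still-active weights."""
    magnitudes = np.abs(weights[mask])
    n_prune = int(fraction * magnitudes.size)
    if n_prune == 0:
        return mask.copy()
    # Magnitude of the n_prune-th smallest active weight.
    threshold = np.partition(magnitudes, n_prune - 1)[n_prune - 1]
    return mask & (np.abs(weights) > threshold)


def input_goggles(input_mask):
    """An input dimension survives if any of its outgoing weights survives.

    Assumes rows index input dimensions and columns index hidden units.
    """
    return input_mask.any(axis=1)


def compress(observation, goggles):
    """Drop the input dimensions that the pruned input layer never reads."""
    return observation[..., goggles]


# Hypothetical input layer: 8 input dimensions feeding 4 hidden units.
rows, cols = np.indices((8, 4))
weights = 0.5 + 0.1 * cols + 0.01 * rows
weights[[2, 5], :] *= 1e-3  # assumption: inputs 2 and 5 are uninformative

mask = np.ones_like(weights, dtype=bool)
for _ in range(3):
    # Real IMP would retrain the masked network here before pruning again.
    mask = prune_step(weights, mask, fraction=0.2)

goggles = input_goggles(mask)
observation = np.arange(8.0)
compressed = compress(observation, goggles)
print(np.flatnonzero(goggles), compressed.shape)
```

In this toy setup the two input dimensions with tiny weights lose all of their outgoing connections, so the goggles shrink an 8-dimensional observation to 6 dimensions. A dense agent would then be trained on `compressed` instead of the full observation.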

Check out the preprint and feel free to drop me a note or hang out at the poster sessions on May 7th. This is joint work with the phenomenal Master's student Marc Vischer and my supervisor Henning Sprekeler.